- Developing a real-time EMS triage pipeline using multimodal AI for trauma prediction.

- Collaborating with surgeons on AI-assisted decision support systems.

Chi-Sheng (Michael) Chen 陳麒升

I am a Research Affiliate at Harvard Medical School and Beth Israel Deaconess Medical Center (BIDMC), and Co-founder of Omnis Labs, an AI-driven DeFi liquidity positioning protocol.

My research focuses on multimodal AI systems for medical and clinical applications, spanning clinical biosignal processing, speech and language understanding, and quantum machine learning. Previously, I was an AI Trainer at OpenAI, CTO of Neuro Industry, Inc., and a Digital IC Design Engineer at MediaTek.

I hold an M.S. in Computer Science & Biomedical Engineering (NTU BEBI, College of EECS) from National Taiwan University, a B.Eng. in Physics (NCTU Electrophysics, College of Science) and a B.S. in Interdisciplinary Science (NCTU ISDP; Electrical Engineering, Life Sciences & Applied Mathematics) from National Chiao Tung University (now National Yang Ming Chiao Tung University).

I am seeking PhD opportunities in classical/quantum machine learning for clinical biosignals and foundation models, starting Fall 2026.

You can contact me at: cchen34 [at] bidmc.harvard.edu | m50816m50816 [at] gmail.com | chisheng.m.chen [at] gmail.com

News

- 🎖️ May 2026: Recognized as Gold Reviewer at ICML 2026, placing among the top reviewers based on area chair ratings.

- 🎖️ Apr 2026: Invited to serve on the Program Committee for the QNRL Workshop @ IEEE WCCI 2026.

- 🎖️ Apr 2026: Recognized as IOP Trusted Reviewer by the Institute of Physics Publishing for quality and commitment in peer review.

- 🏆 Mar 2026: Our poster "GPU-Accelerated Chinese Brain-to-Text Interface Powered by NVIDIA H100 80GB" selected as Top 8 Finalist at NVIDIA GTC 2026.

- 🏆 Feb 2026: Our team MadGAA achieved 3rd Place in Healthcare Agent at the Berkeley RDI AgentX-AgentBeats competition (Phase 1, 1,300+ teams worldwide). [Project]

- 🚩 Mar 2026: Two posters presented at NVIDIA GTC 2026 — "GPU-Accelerated Chinese Brain-to-Text Interface Powered by NVIDIA H100 80GB" (Top 8 Finalist) and "Accessible Quantum Reinforcement Learning for Finance: Benchmarking on NVIDIA RTX GPUs."

- 🚩 Jan 2026: Two papers accepted at IEEE ICASSP 2026 — "Quantum Reinforcement Learning-Guided Diffusion Model for Image Synthesis" (oral presentation) and "Quantum Adaptive Self-Attention for Financial Rebalancing: An Empirical Study on Automated Market Makers in Decentralized Finance" (oral presentation).

- 🚩 Aug 2025: Two papers accepted at IEEE GLOBECOM Workshop 2025 — "Q-DPTS" and "Benchmarking Quantum and Classical Sequential Models."

- 🚩 Jun 2025: Paper accepted at IEEE QCE 2025 — "Quantum Reinforcement Learning Trading Agent for Sector Rotation."

- 🚩 May 2025: Paper accepted at IEEE CIBCB 2025 — "Enhancing Clinical Decision-Making."

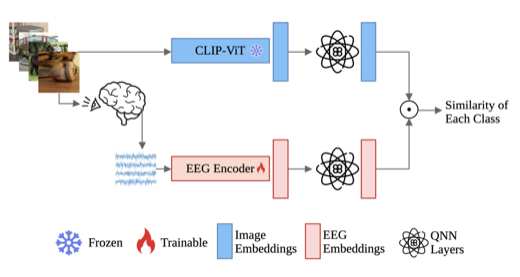

- 🚩 Jan 2025: Paper accepted at IEEE ICASSP 2025 — "Quantum Multimodal Contrastive Learning Framework" (oral presentation).

Research Vision

I aim to build clinically deployable AI systems that bridge quantum computing, multimodal learning, and emergency medicine. Modern clinical environments generate rich, heterogeneous signals — EEG, audio, imaging, text — yet real-time decision support remains fragmented. My work addresses this gap through two complementary directions: (1) designing hybrid quantum-classical architectures that capture complex temporal dependencies in biosignals, and (2) engineering end-to-end multimodal pipelines that integrate speech, language, and physiological data for trauma triage and psychiatric treatment prediction. A core principle of my research is clinical translation: my EEG-based models are already serving real patients at the Precision Depression Intervention Center in Taipei. Beyond healthcare, my time-series methods have been validated in DeFi market-making, achieving 50%–120% base fee APR in production.

Selected Publications

Research

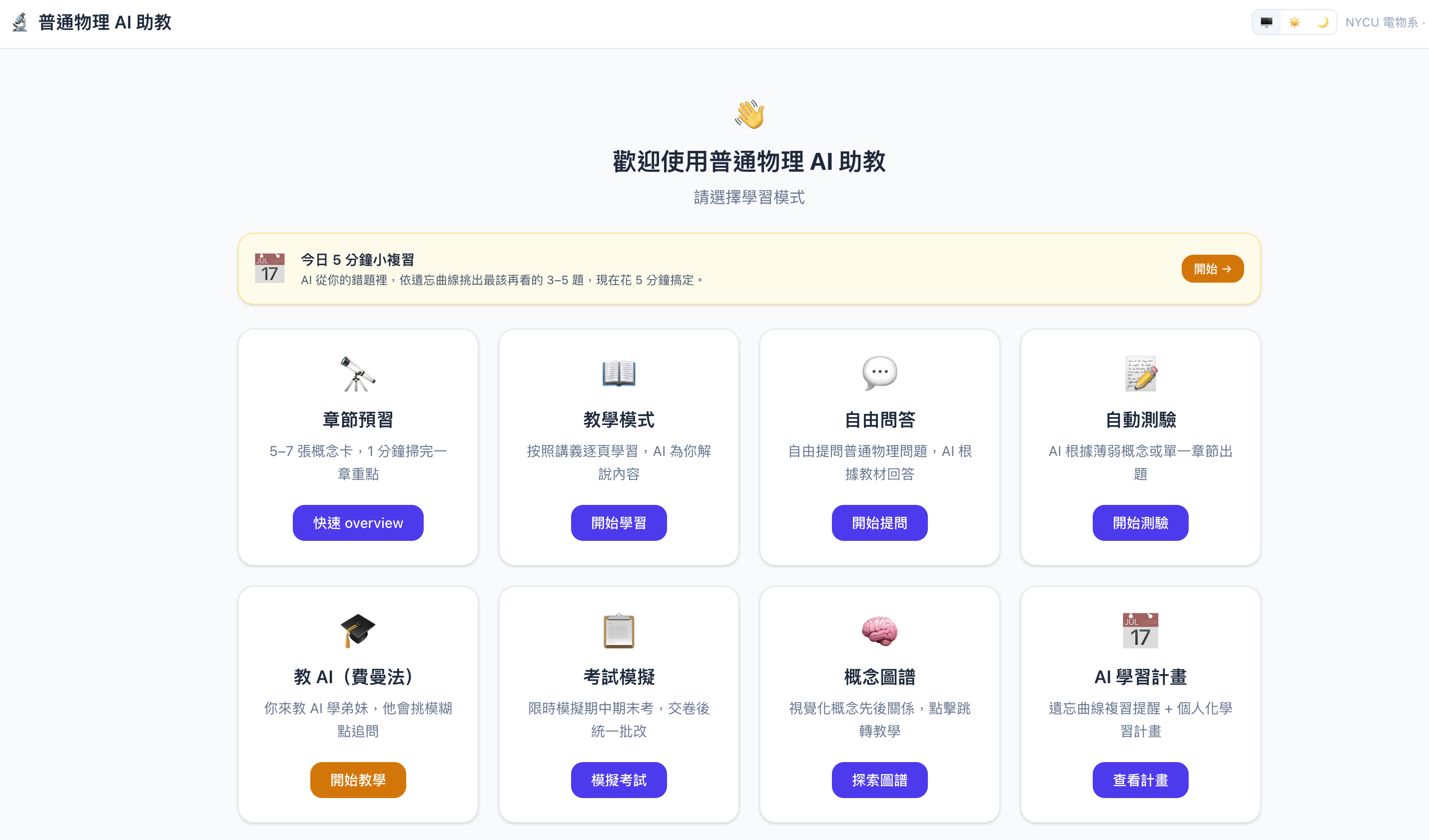

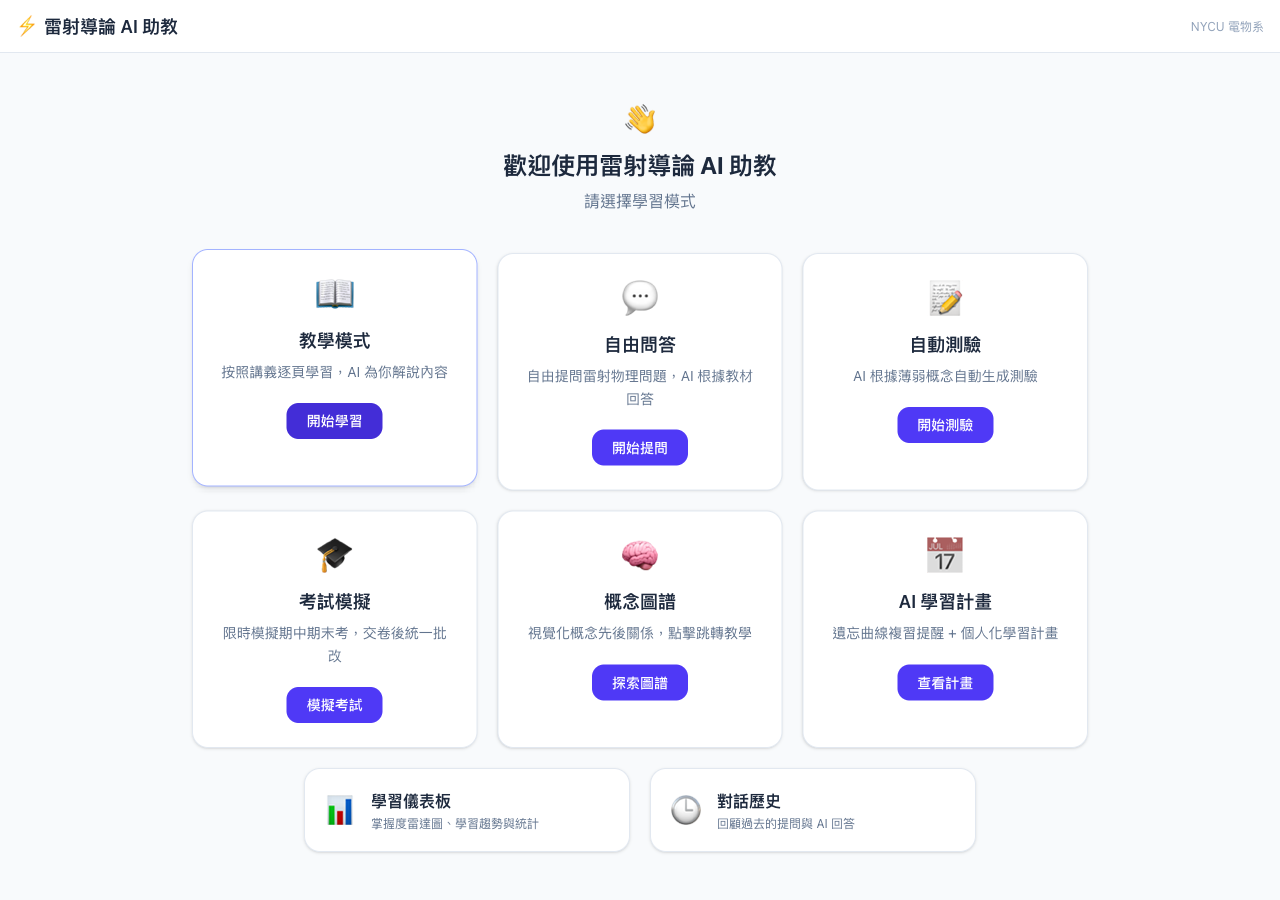

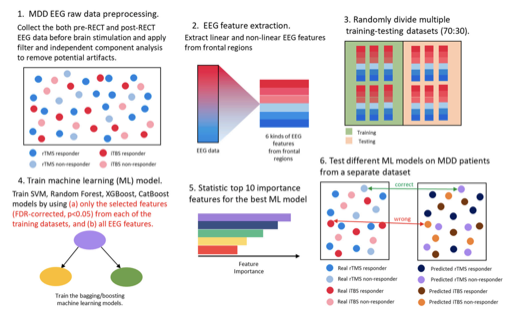

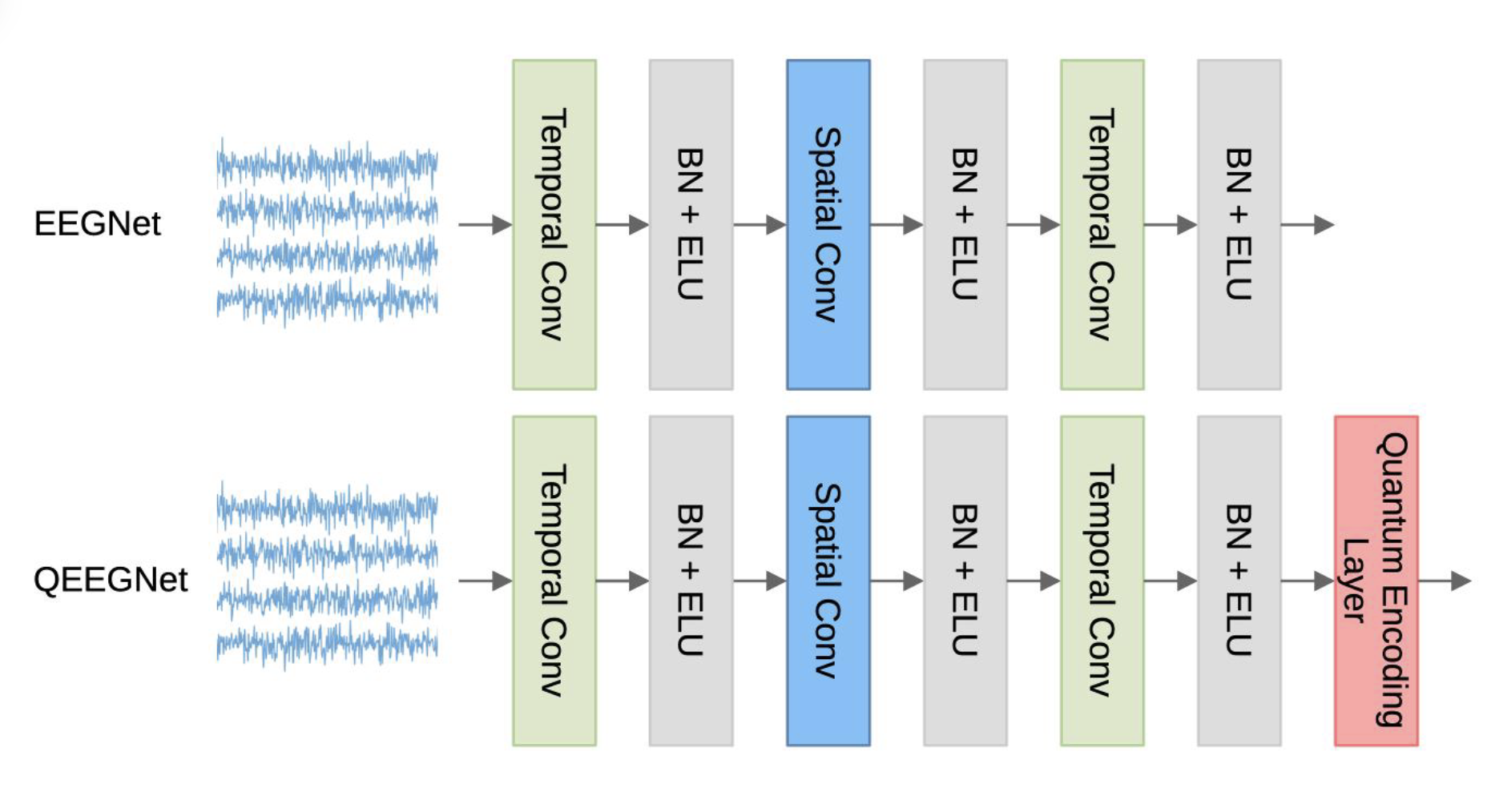

Clinical AI & Biosignal Processing

Developing AI systems for clinical neuroscience and emergency medicine.

My EEG-based depression treatment prediction models have been clinically deployed at the Precision Depression Intervention Center (PreDIC) at Taipei Veterans General Hospital, serving real outpatients.

At Harvard/BIDMC, I am building real-time EMS triage pipelines using multimodal AI for trauma prediction, collaborating with surgeons on AI-assisted decision support systems.

I also develop multimodal contrastive learning methods for EEG-image alignment, such as MUSE.

Speech & Language Processing for Healthcare

Building speech and natural language processing systems for clinical settings, including EMS audio transcription, automated clinical documentation, and emergency page generation for trauma prediction workflows.

Quantum Machine Learning

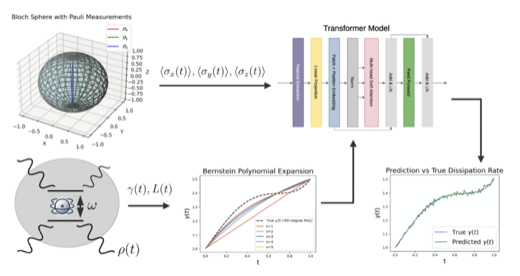

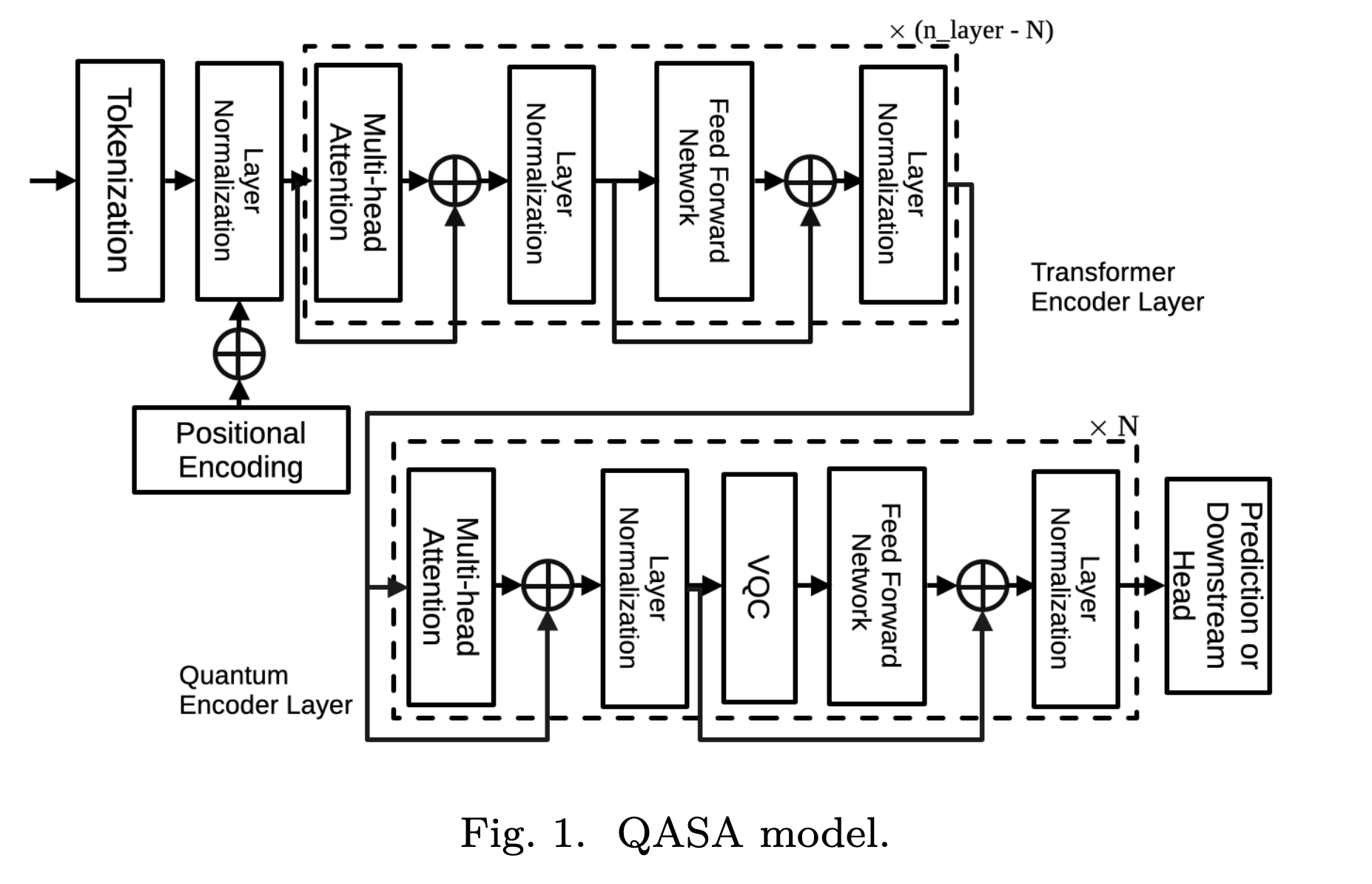

Designing hybrid quantum-classical architectures for time-series and sequential data, including Quantum Adaptive Self-Attention (QASA).

Computer Vision

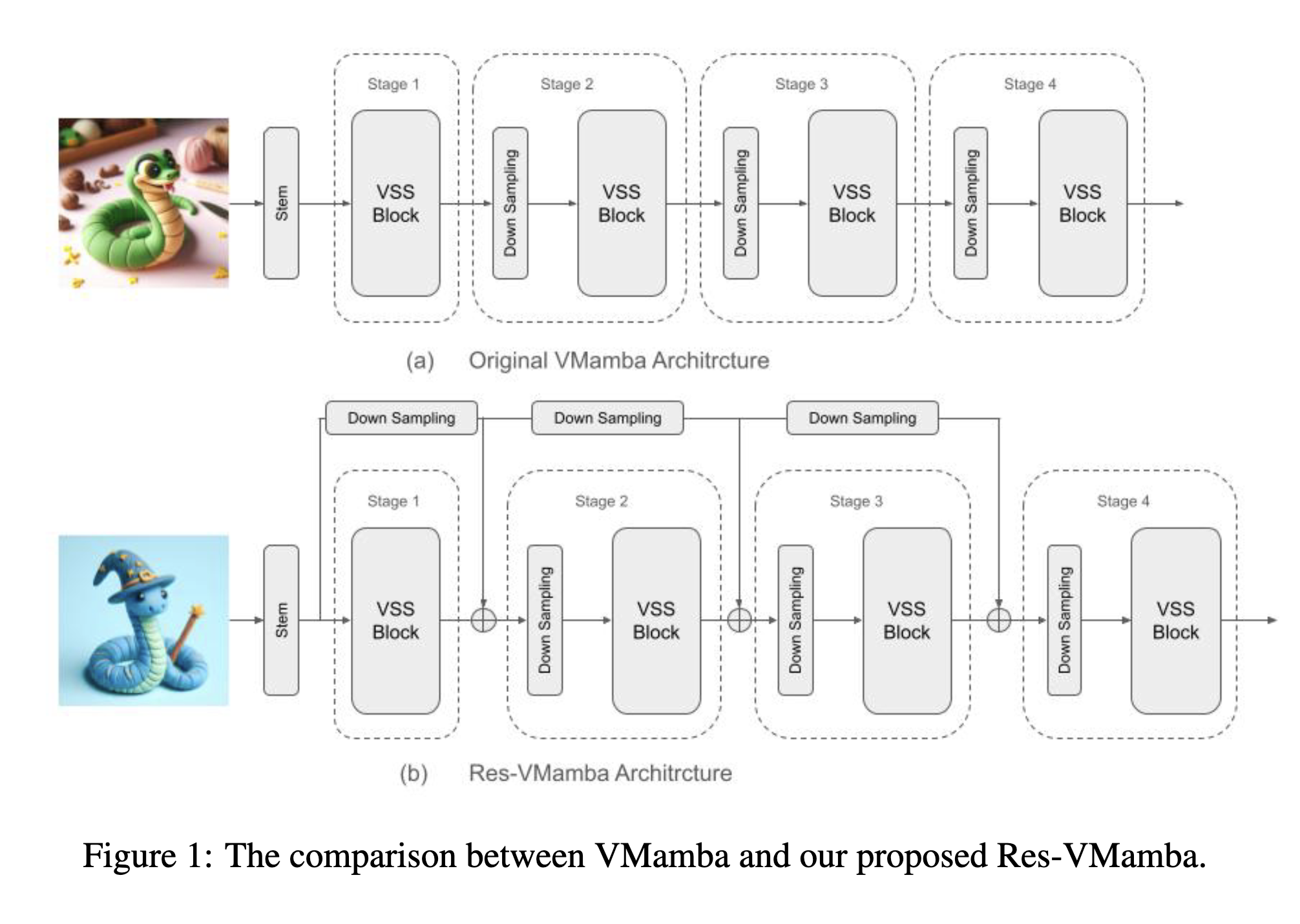

Applying deep learning to visual recognition tasks, including surgical instrument detection for intraoperative safety and fine-grained food classification with foundation models such as Res-VMamba.

Time-Series Classification & Forecasting

Developing interpretable and geometric deep learning methods for time-series analysis, including FreqLens for frequency-domain attribution in forecasting and SPD Token Transformers for EEG classification with Riemannian geometry. Applications extend to DeFi yield prediction and urban telecommunication forecasting, as well as Transformer-assisted learning in open quantum systems (Lindblad dynamics). My time-series forecasting models have been deployed in production for DeFi AMM liquidity strategies, achieving 50%–120% base fee APR on major trading pairs.

Research Experience

- Built a GCP-based MLOps pipeline, enabling training on 10K+ EEG samples.

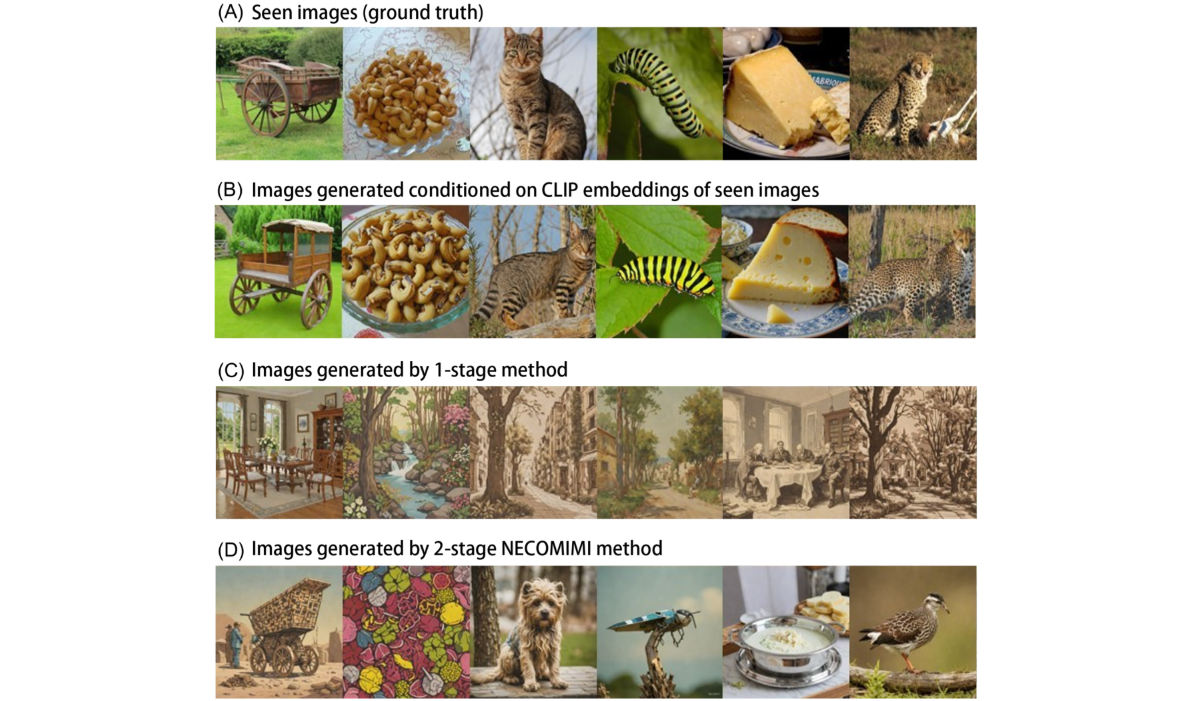

- Two research papers on quantum machine learning for EEG signal processing.

- One research paper on EEG-to-image generation with a diffusion model.

- Developed MUSE, a multimodal contrastive learning framework for EEG-based image recognition (22 citations).

- Research on nonstationary EEG dynamics and foundation models for brain-computer interfaces.

- Developed deep learning models for intraoperative surgical gauze detection (17 citations) and surgical smoke evacuation systems.

- Applied YOLO-based object detection for real-time surgical instrument tracking and safety automation.

- Collaborated with researchers and clinicians to identify patterns and trends in brainwave activity related to major depressive disorder.

- Utilized machine learning algorithms to develop predictive models based on real-world brainwave data.

- Searching for possible new unconventional superconductors among Co-based quaternary chalcogenides with a diamond-like structure: CuInCo₂A₄ and AgInCo₂A₄ (A = Te, Se, S).

Projects & Industry

- AI-driven DeFi liquidity positioning protocol with automated market maker (AMM) strategies and backtesting systems.

- Achieved 50%–120% base fee APR on WBTC/USDC and ETH/USDC pairs (Katana on SushiSwap) using time-series model-driven AMM strategies.

- Contributed to Reinforcement Learning from Human Feedback (RLHF) pipelines through high-complexity AI data labeling, preference rankings, and model-behavior assessments for instruction following, multimodal reasoning, and safety alignment.

- Microprocessor IP development for flagship 5G smartphones' display and AMBA SoC implementation.

- Hardware virtualization architecture RTL design and IP verification with UVM and SystemVerilog.

Education

- Advisors: Prof. Dr. Cheng-Ta Li, MD & Prof. Chung-Ping Chen

- Machine learning applications for non-stationary time-series data.

- The research EEG model for personalized TMS pattern prediction is currently applied to real outpatients at the Precision Depression Intervention Center (PreDIC), Taipei Veterans General Hospital.

- Researching oxide processing techniques. (Intern at Max Planck Institute)

- Researching biomedical signal processing on traditional Chinese medicine data. (Advisor: Prof. Sheng-Chieh Huang)

Skills

AI / ML & Deep Learning

LLMs, Agents & RAG

Quantum Machine Learning

MLOps, Cloud & Infrastructure

Quant & Finance Engineering

Programming & Systems

AI-Powered Dev Tools

Languages

Chinese (Native) · English (Fluent — 1.5+ years working at Harvard/BIDMC and OpenAI)

Professional Service

Reviewer for 29 journals (incl. TPAMI, NSR, npj Quantum Information, Scientific Reports, JBHI, IEEE IoT Journal, ACM TORS) and 5 top conferences (NeurIPS / ICML / KDD / MICCAI / ICASSP 2026), and Program Committee member for the QNRL Workshop @ IEEE WCCI 2026.

Program Committee

Conference Reviewer

NeurIPS 2026, ICML 2026 (Gold Reviewer — top tier), KDD 2026, MICCAI 2026, ICASSP 2026

Journal Reviewer (2025–present)

- Oxford University Press National Science Review (NSR)

- IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI)

- IEEE Transactions on Neural Networks and Learning Systems (TNNLS)

- IEEE Transactions on Neural Systems & Rehabilitation Engineering (TNSRE)

- IEEE Transactions on Systems, Man and Cybernetics: Systems (TSMC)

- IEEE Transactions on Human-Machine Systems (THMS)

- IEEE Internet of Things Journal (IoT-J)

- IEEE Transactions on Consumer Electronics (TCE)

- IEEE/ACM Transactions on Audio, Speech, and Language Processing (TASLP)

- IEEE Transactions on Cognitive and Developmental Systems (TCDS)

- IEEE Journal of Biomedical and Health Informatics (JBHI)

- BMC Medical Informatics and Decision Making

- IEEE Signal Processing Letters (SPL)

- IEEE Access

- ACM Transactions on Recommender Systems (TORS)

- Tsinghua Science and Technology

- npj Quantum Information

- Scientific Reports (Nature Portfolio)

- EPJ Quantum Technology

- IOP Journal of Neural Engineering

- Taylor & Francis Behaviour & Information Technology (BIT)

- Springer International Journal of Machine Learning and Cybernetics

- Springer Cognitive Neurodynamics

- Springer Journal of Bionic Engineering

- IOP Biomedical Physics & Engineering Express

- IOP Engineering Research Express

- AIMS Quantitative Finance and Economics (QFE)

- AIMS Public Health

- IntechOpen AI, Computer Science and Robotics Technology (ACRT)

Teaching

- Guided undergraduate students through wet-lab experiments: Biological Safety Cabinet operation, E. coli transformation, PCR, gel electrophoresis, and plasmid purification.

- Designed lab protocols and assessment rubrics; held weekly office hours and one-on-one troubleshooting sessions.

Invited Talks & Presentations

Awards & Achievements

- 2026: ICML 2026 Gold Reviewer — recognized among the top reviewers based on area chair ratings.

- 2026: IOP Trusted Reviewer — recognized by the Institute of Physics Publishing for quality and commitment in peer review.

- 2026: NVIDIA GTC 2026 Top 8 Finalist — "GPU-Accelerated Chinese Brain-to-Text Interface Powered by NVIDIA H100 80GB."

- 2026: Berkeley RDI AgentX-AgentBeats Phase 1: Healthcare Agent 3rd Place (Team MadGAA, 1,300+ teams worldwide). [Project]

- 2023: Certificate of the 20th National Innovation Award, clinical research category.

- 2019: International Blockchain Olympiad (IBCOL): World Finalist (100+ teams).

- 2017: Microsoft International Imagine Cup: 2nd place in Taiwan.

- 2016: International Genetically Engineered Machine Competition (iGEM): World Gold medal, Best Applied Design, Best Part Collection, Best Presentation (300+ university teams).